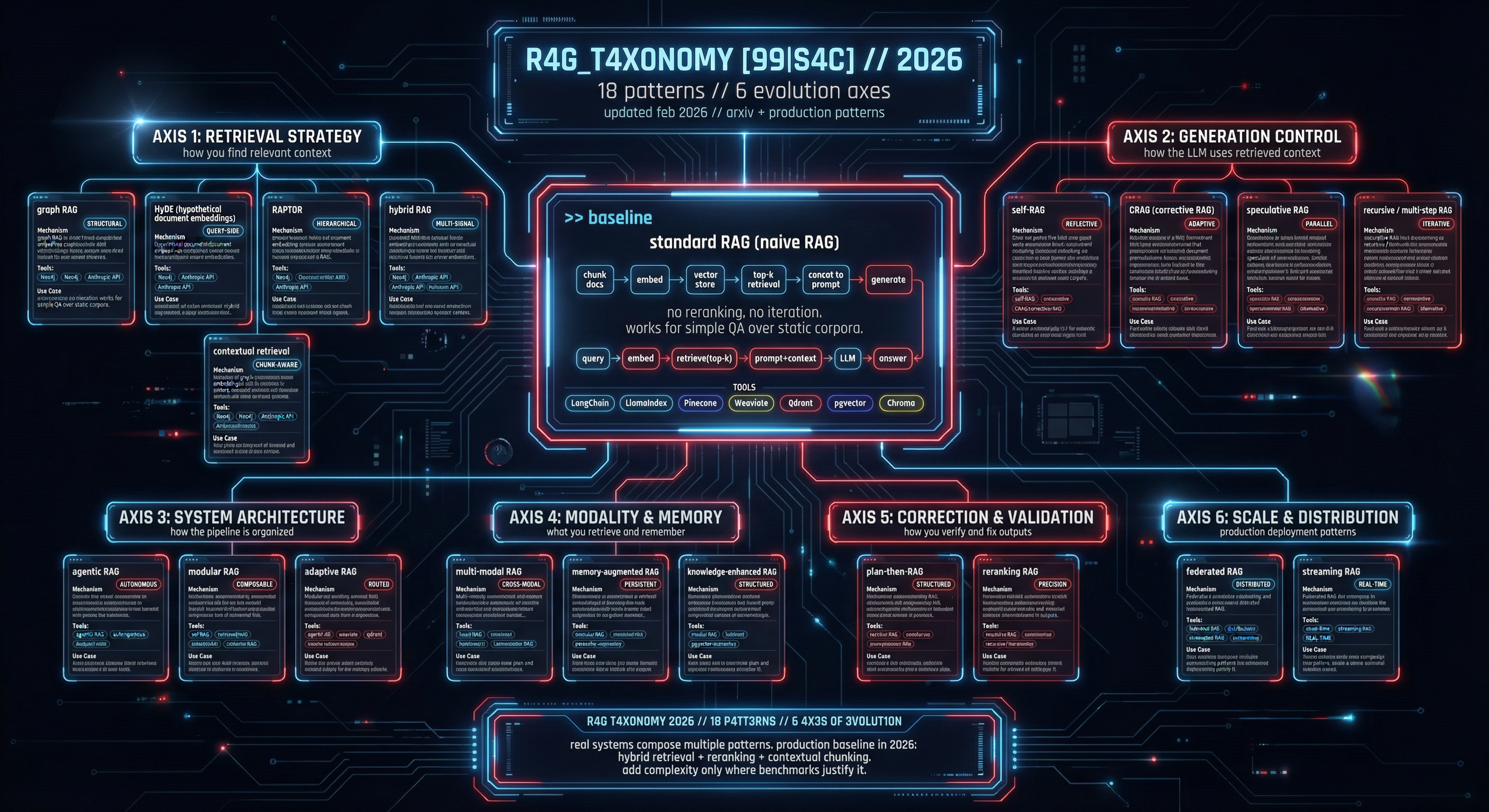

[99|S4C] R4G_T4X0N0MY

updated feb 2026 // arxiv + production patterns

>> baseline

standard RAG (naive RAG)

mechanism: chunk docs -> embed -> vector store -> top-k retrieval -> concat to prompt -> generate. no reranking, no iteration. works for simple QA over static corpora.

query -> embed -> retrieve(top-k) -> prompt+context -> LLM -> answer

LangChain

LlamaIndex

Pinecone

Weaviate

Qdrant

pgvector

Chroma

>>

retrieval strategy

how you find relevant contextgraph RAG

STRUCTURAL

knowledge graph as retrieval substrate. entities + relations replace flat chunks. enables multi-hop reasoning across connected nodes. community detection for global summarization.

Neo4j

Microsoft GraphRAG

LlamaIndex KG

Apache Jena

multi-entity QA, legal discovery, biomedical literature

HyDE (hypothetical document embeddings)

QUERY-SIDE

LLM generates hypothetical answer -> embed that instead of query. bridges vocabulary mismatch between questions and documents. zero-shot; no labeled data needed. cost: extra LLM call per query.

LangChain HyDE

LlamaIndex

custom pipeline

technical docs, cross-domain search, ambiguous queries

RAPTOR

HIERARCHICAL

tree-structured retrieval: cluster chunks -> summarize clusters -> recursive tree. query matches at multiple abstraction levels. handles both specific detail and thematic questions from same corpus.

RAPTOR (Stanford)

LlamaIndex TreeIndex

long documents, book-level QA, research synthesis

hybrid RAG

MULTI-SIGNAL

dense vectors + sparse retrieval (BM25/TF-IDF) fused via reciprocal rank. dense catches semantics; sparse catches exact terms. outperforms either alone on most benchmarks. standard in production.

Elasticsearch + vector

Weaviate hybrid

Qdrant sparse

ColBERT

production search, e-commerce, enterprise QA

contextual retrieval

CHUNK-AWARE

prepend document-level context to each chunk before embedding. anthropic's approach: LLM generates 50-100 token context prefix per chunk. reduces retrieval failures 49% (anthropic benchmark). combine with BM25 for 67% improvement.

Anthropic API

custom pipeline

LlamaIndex

any chunked corpus where chunk isolation hurts retrieval

>>

generation control

how the LLM uses retrieved contextself-RAG

REFLECTIVE

LLM decides when to retrieve, then self-critiques output with special tokens. trained on reflection tokens: [Retrieve], [IsRel], [IsSup], [IsUse]. skips retrieval when unnecessary. 5-20% accuracy gains on knowledge-intensive tasks.

Self-RAG (UW)

Hugging Face models

open-domain QA, fact verification, citation-heavy tasks

CRAG (corrective RAG)

ADAPTIVE

evaluator scores retrieval quality -> three paths: correct (use docs), ambiguous (refine + web search), incorrect (web search only). lightweight retrieval evaluator triggers correction before generation. robust to noisy retrieval.

LangGraph

custom pipeline

Tavily Search

production QA with unreliable corpora, mixed-source systems

speculative RAG

PARALLEL

small LM generates multiple draft answers from different doc subsets -> large LM verifies best. drafting parallelized across doc partitions. reduces latency (large model sees only candidates, not all docs). accuracy: comparable to full-context.

custom pipeline

vLLM (small model)

low-latency production, cost-sensitive large-context tasks

recursive / multi-step RAG

ITERATIVE

chain of retrieval-generation loops. each step refines query using previous output. decomposes complex questions into sub-queries. each iteration sharpens context window. tradeoff: latency scales linearly with steps.

LlamaIndex sub-question

DSPy

LangGraph

multi-hop reasoning, comparative analysis, research synthesis

>>

system architecture

how the pipeline is organizedagentic RAG

AUTONOMOUS

agent decides: which tools, when to retrieve, how to combine. LLM as orchestrator over retrieval tools, APIs, calculators, code execution. planning + memory + tool selection. genuine decision loops, not just retrieve-then-generate.

LangGraph

CrewAI

AutoGen

OpenAI Assistants

complex research, multi-source analysis, autonomous workflows

modular RAG

COMPOSABLE

pipeline as interchangeable modules: retriever, reranker, compressor, generator, router. swap components without rewriting. enables A/B testing individual stages. DSPy-style programmatic optimization of each module.

DSPy

Haystack

LlamaIndex pipelines

production systems needing iterative optimization

adaptive RAG

ROUTED

classifier routes query to optimal strategy: no retrieval | single-step | multi-step. simple queries skip retrieval entirely. complex queries get iterative pipeline. reduces unnecessary latency and cost on easy questions.

LangGraph

custom classifier

DSPy

high-throughput QA with mixed query complexity

>>

modality & memory

what you retrieve and remembermulti-modal RAG

CROSS-MODAL

retrieve across text, images, tables, audio, video. unified embedding space (CLIP/SigLIP) or modal-specific retrievers with fusion layer. table extraction via layout models. image captioning as retrieval bridge.

LlamaIndex multi-modal

Unstructured.io

ColPali

GPT-4V/Gemini

document AI, medical imaging + reports, video search

memory-augmented RAG

PERSISTENT

external memory store persists across sessions. conversation history, user preferences, learned facts stored in retrievable memory. long-term: vector store. short-term: buffer. episodic + semantic memory layers.

Mem0

Zep

LangGraph memory

Redis

chatbots, personal assistants, long-running agent sessions

knowledge-enhanced RAG

STRUCTURED

structured knowledge (ontologies, schemas, domain rules) injected alongside retrieved text. combines unstructured retrieval with structured reasoning constraints. reduces hallucination in domain-specific contexts.

SPARQL endpoints

Wikidata

domain ontologies

legal, medical, financial compliance QA

>>

correction & validation

how you verify and fix outputsplan-then-RAG

STRUCTURED

generate retrieval plan before executing any search. LLM decomposes question -> identifies information needs -> plans retrieval sequence -> executes. avoids irrelevant retrieval and ensures coverage.

DSPy

custom pipeline

LangGraph

complex research questions, multi-source investigations

reranking RAG

PRECISION

cross-encoder reranks initial retrieval results before generation. first-stage: fast bi-encoder (top-100). second-stage: slow cross-encoder (top-k from 100). dramatically improves precision@k. near-universal in production.

Cohere Rerank

ColBERT

bge-reranker

Jina Reranker

any production RAG system (should be default)

>>

scale & distribution

production deployment patternsfederated RAG

DISTRIBUTED

retrieval across decentralized data sources without centralizing data. privacy-preserving: data stays at source. aggregation layer merges results. critical for healthcare, finance, multi-org collaborations.

Flower

PySyft

custom federation

cross-hospital research, multi-bank compliance, consortium QA

streaming RAG

REAL-TIME

continuous index updates from live data streams. new documents indexed on ingest, not batch. handles time-sensitive queries where stale data = wrong answers. event-driven architecture.

Kafka + vector DB

Apache Flink

Milvus streaming

news, financial data, social monitoring, fraud detection