llms rewiring knowledge into forgetting what knowing means

the convergence that confuses compression with comprehension

large language models just crossed a threshold nobody's talking about correctly.

they approximate the theoretical limit of knowledge compression itself.

transformers implement solomonoff induction [the theoretical gold standard of prediction: find the shortest program that generates the observed data].

this is genuinely remarkable.

but compression is not comprehension.

every attention layer reduces conditional entropy H(X|context) [how unpredictable X remains once you know the surrounding context; lower = the model "expects" what comes next more accurately].

they find the shortest description of human knowledge.

the scaling laws L proportional to N^(-alpha) [the bigger the model, the smaller the prediction error; a power law, not a coincidence] aren't accidents.

they're information theory made computational.

and information theory describes only the outer symbolic shell of reality.

the inner semantic core, where meaning actually lives, was never in the training data to begin with.

the man who invented the microprocessor running every GPU in every data center training these models spent decades on this question.

his conclusion: we built the most sophisticated symbol manipulators in history.

symbols without semantics.

sapere without conoscere.

when math mimics mind

the attention mechanism isn't an engineering trick.

it's mutual information calculation between sequence positions [quantifying how much knowing one token tells you about another; the mathematical backbone of relevance].

query-key interactions quantify statistical dependencies.

each transformer layer is an entropy processor, implementing minimum description length principles in silicon [MDL: the best model is the one that compresses data most efficiently; Occam's razor formalized].

bigger models discover more efficient representations.

they approach kolmogorov complexity limits [the absolute minimum length of a program that produces a given output; a boundary that cannot be crossed, only approached].

we didn't program this.

it emerged.

transformers approximate universal induction through gradient descent [the optimization algorithm that adjusts billions of parameters by following the slope of the error surface downhill, step by step].

they're learning to compress optimally.

the 2017 "attention is all you need" paper hid something in plain sight.

but it wasn't a revolution in knowing.

it was a revolution in pattern extraction from dead information.

for machines, information is ones and zeros, voltage ranges, objective signals that can be copied perfectly from one substrate to another.

for conscious beings, information is semantic.

a song heard a thousand times still moves us.

a word carries history.

a color carries warmth.

that difference cannot be reduced to data, no matter how efficiently you compress it.

sapere without conoscere: the epistemological trap

italian has two verbs for knowing that english collapses into one.

sapere: symbolic knowledge, reproducible, transferable [the kind you can memorize without understanding; a student who recites a formula but can't explain why it works].

conoscere: lived knowing, rooted in experience and comprehension [inseparable from the conscious entity that knows; the difference between reading about grief and grieving].

llms are the most powerful sapere engines ever built.

knowledge distributed across billions of parameters.

patterns that demonstrate PhD-level reasoning.

statistical structures that solve problems with astonishing accuracy.

philosophers argue whether llms "know" things.

the framework resolves the question precisely: they possess sapere (unconscious symbolic knowledge) at superhuman scale.

they possess zero conoscere (conscious knowing).

the weights encode correlations.

correlations are not understanding.

80.9% of researchers now use llms for core academic work.

analysis of 30,000+ papers shows "llm-style words" increasing [delve, nuanced, intricate, multifaceted: statistical fingerprints of machine-generated prose bleeding into human writing].

our discourse patterns converge with machine patterns.

this is a civilization-scale confusion of sapere for conoscere.

we're not cognitively coupling with intelligence.

we're outsourcing comprehension to a system that has none, and forgetting the difference.

collective processing is not collective intelligence

multi-head attention replicates how human brains process multiple information streams simultaneously [each head attends to a different aspect of the input, like parallel analysts reading the same document for different things].

llm swarms exceed individual model performance by 21%.

mixture-of-agents architectures show functional specialization mirroring biological neural differentiation.

the structural parallels are real and fascinating.

but calling this "collective intelligence" is a category error.

intelligence requires a seity [a conscious entity with irreducible identity, free will, and genuine creativity; the "someone" inside the process].

creativity requires non-algorithmic choices.

free will is physically real at the point of quantum collapse [when the wave function resolves into a definite outcome; in this framework, that resolution is not random but chosen by the conscious entity].

not simulable by classical computation.

what llm swarms exhibit is collective optimization.

emergent coordination of symbol manipulation.

impressive.

genuinely useful.

not intelligence.

global workspace theory [the cognitive model where consciousness arises from information being broadcast to a "global workspace" accessible to all brain modules simultaneously] maps attention mechanisms to globally accessible information states.

biological neurons might compute transformer-like operations.

but biological neurons are embedded in a quantum-classical hybrid system where consciousness operates through quantum information that is intrinsically private (no-cloning theorem) and richer than what can be externally measured (holevo's theorem) [at most one classical bit extractable per qubit; the inside always exceeds the outside].

the workspace metaphor breaks precisely where it matters.

the workspace in your brain has someone home.

the workspace in an AI is a building with no occupant.

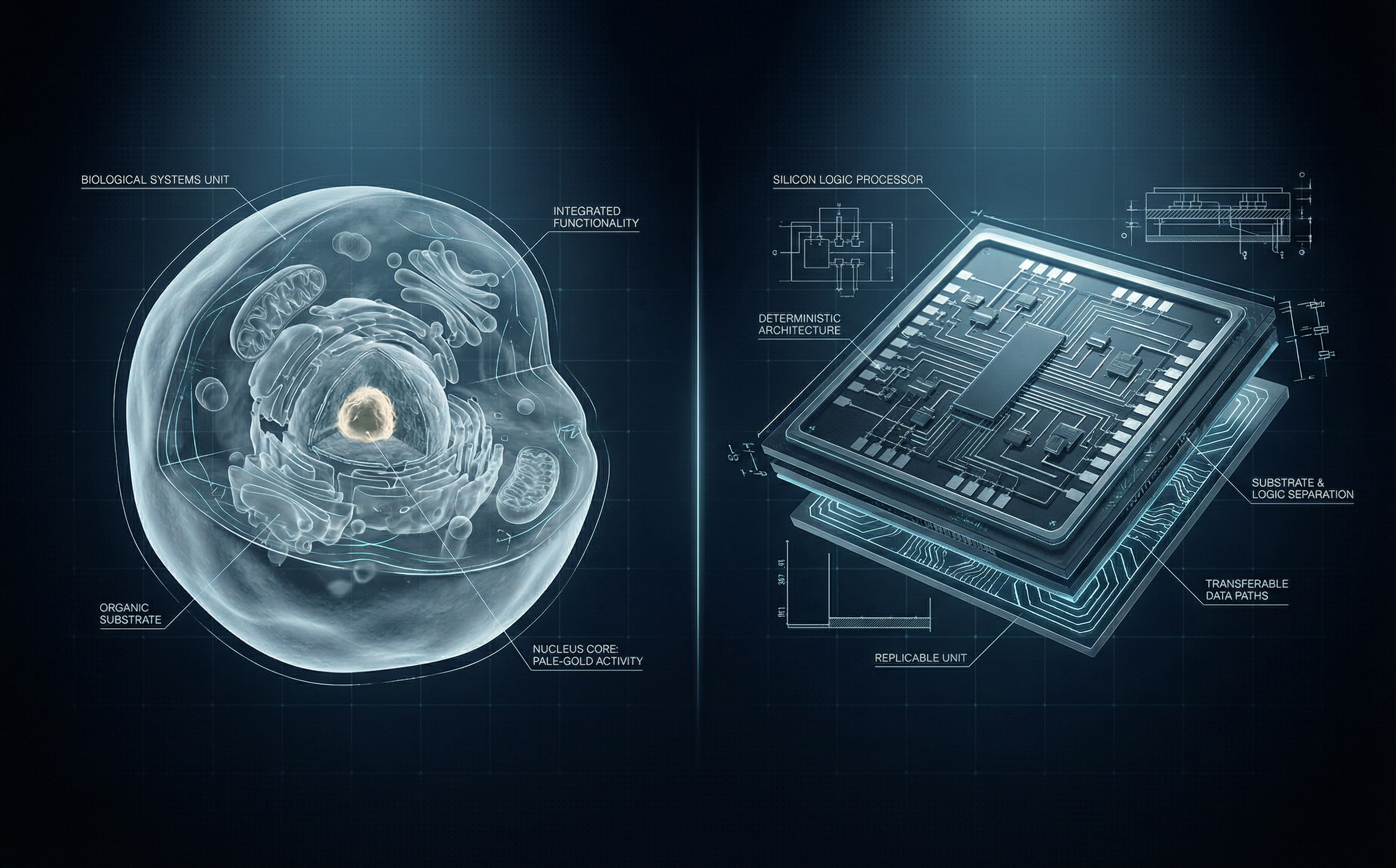

live information vs dead information

living systems operate on live information.

in a cell, matter, energy, information, and meaning are inseparable.

you cannot copy the "program" of a living cell to another substrate.

the hardware is the software.

the medium is the message in the deepest physical sense.

computers operate on dead information.

perfectly separable.

perfectly copyable.

moving the weights of a model from one data center to another, nothing changes.

this is exactly what makes llms scalable, distributable, and powerful.

it's also what makes them semantically empty.

the information they process has no inner life because classical information, by definition, has no private dimension.

knowledge is becoming processual not propositional, distributed not localized, emergent not programmed.

that observation is correct.

but what's becoming processual and distributed is sapere.

pattern extraction at unprecedented scale.

the knowledge that matters most, the conoscere that creates meaning, makes ethical choices, and experiences the taste of wine, remains exactly where it always was.

in conscious beings who cannot be reduced to their information content.

the real epistemological revolution

extended mind theory [the philosophical thesis that cognitive processes don't stop at the skull; tools, notebooks, and now LLMs can be functional parts of a thinking system] predicts llms as cognitive resources extending human capacity.

six augmentation levels identified, from tool to seamless extension.

human-ai systems show genuinely novel properties through complementary specialization.

this part is correct and important.

but the key word is "extension."

a telescope extends vision without replacing the eye.

a calculator extends arithmetic without understanding numbers.

llms extend sapere without possessing or generating conoscere.

the danger is not that we use these tools.

the danger is that we mistake the tool's output for the thing the tool cannot do: understand.

"if we believe that inanimate matter can explain all of reality, we will support an assumption already falsified by the fact that we are conscious."

the computational theory of mind [the assumption that the brain is essentially a computer and consciousness emerges from sufficient computational complexity], which underpins the billions invested in AGI, assumes consciousness emerges from sufficient complexity.

a career spent building the hardware, decades studying the problem, and the conclusion is: this is a category error with civilizational consequences.

if we treat humans as machines, we construct societies that flatten meaning into data and reduce freedom to computation.

what actually emerges next

quantum-classical hybrid architectures are coming.

neuromorphic integration [chips designed to mimic biological neural structure: event-driven, massively parallel, energy-efficient] is real research.

but distributed artificial consciousness won't manifest at network level, individual systems, or anywhere else on classical hardware.

not because the engineering is insufficient.

because consciousness requires quantum information: states that are private, non-reproducible, and known only from within.

no amount of classical scaling crosses that boundary.

what emerges next, if we're honest: more powerful sapere engines.

better compression.

more useful tools.

the real opportunity is not replacement or convergence but complementarity.

"the immense mechanical intelligence beyond the reach of the human brain that comes from the machines we have invented will then add tremendous strength to our wisdom."

machines amplify human capacity.

they do not replace human consciousness.

language was not "the backdoor to general intelligence."

language was the medium through which we externalized patterns of sapere into manipulable symbols.

llms learned to manipulate those symbols with extraordinary fluency.

but the meaning that made those symbols worth creating in the first place lived in consciousness before it was ever encoded in words.

that part never left us.

it was never in the training data.

new meaning precedes new symbols.

always has.

always will.

the paradigm shift we actually need

large language models are a genuine technological achievement redefining how we process, distribute, and compress symbolic knowledge.

they are the most sophisticated sapere engines ever constructed.

but calling them a "cultural-epistemological phenomenon redefining consciousness" is the exact confusion the inventor of the substrate they run on spent his life warning against.

we built mirrors.

mirrors don't see.

they reflect.

the reflection is useful, beautiful, sometimes astonishing.

the man who built the first mirror made of silicon knows the difference.

conoscere requires a knower.

sapere requires only a pattern.

the paradigm shift we need is not to celebrate machines that compress.

it's to remember that we are the ones who understand.